I’ve completed a fresh install of Ubuntu on an old box that used to run Solaris. The system disk died, and after fitting a new (old) drive and installing Ubuntu, I found that two of the disks still in the computer were part of a ZFS raid. I’m not sure how many disks were in the raid, but I’m curious as to what was on this file system, which has been shut down and inaccessible for more than 7-8 years. (I later confirmed that most files on the system are from 2008 and earlier.)

There were only 4 SATA connectors available on the board. Two were used by the drives already in there, and it didn’t take long to find the matching drives to plug in.

Installing ZFS

I began by installing ZFS, following the instructions here:

Install ZFS on Ubuntu—Server as Code

Installing SSH Server

I also had to install an SSH server because this box is located remotely. Any generic OpenSSH server install guide will do; the one I used was SSH/OpenSSH/Configuring.

Because my server is behind a firewall and not publicly accessible, I haven’t worried too much about logging on with SSH keys. I have set that up before, and I do recommend it, but this box is only going to be a temporary install. A few related tutorials on passwordless SSH key access to servers:

https://help.ubuntu.com/community/SSH/OpenSSH/Keys << a good resource, includes troubleshooting

http://www.mccarroll.net/blog/rpi_cluster2/index.html

https://www.howtoforge.com/tutorial/ssh-and-scp-with-public-key-authentication/

https://www.raspberrypi.org/documentation/remote-access/ssh/passwordless.md

Installing Samba

I also installed Samba, following these instructions: How to Create a Network Share Via Samba Via CLI (Command-line interface/Linux Terminal) – Uncomplicated, Simple and Brief Way!

With SSH, Samba and the ZFS modules installed, configured and running… let’s try and rebuild this raid :)

Let’s have a look!

disks:

lsblk

NAME MAJ:MIN RM SIZE RO TYPE MOUNTPOINT

sda 8:0 0 111.8G 0 disk

├─sda1 8:1 0 243M 0 part /boot

├─sda2 8:2 0 1K 0 part

└─sda5 8:5 0 111.6G 0 part

├─ubuntu--vg-root (dm-0) 252:0 0 110.6G 0 lvm /

└─ubuntu--vg-swap_1 (dm-1) 252:1 0 1016M 0 lvm [SWAP]

sdb 8:16 0 698.7G 0 disk

├─sdb1 8:17 0 698.6G 0 part

└─sdb9 8:25 0 8M 0 part

sdc 8:32 0 698.7G 0 disk

├─sdc1 8:33 0 698.6G 0 part

└─sdc9 8:41 0 8M 0 part

sdd 8:48 0 698.7G 0 disk

├─sdd1 8:49 0 698.6G 0 part

└─sdd9 8:57 0 8M 0 part

sde 8:64 0 698.7G 0 disk

├─sde1 8:65 0 698.6G 0 part

└─sde9 8:73 0 8M 0 part

sr0 11:0 1 1024M 0 rom

and for pools specifically:

$ sudo zpool import

pool: solaraid

id: 10786192747791980338

state: ONLINE

status: The pool is formatted using a legacy on-disk version.

action: The pool can be imported using its name or numeric identifier, though

some features will not be available without an explicit 'zpool upgrade'.

config:

solaraid ONLINE

raidz1-0 ONLINE

ata-WDC_WD7500AACS-00C7B0_WD-WCASN ONLINE

ata-WDC_WD7500AAKS-00RBA0_WD-WCAPT ONLINE

ata-WDC_WD7500AAKS-00RBA0_WD-WCAPT ONLINE

ata-WDC_WD7500AAKS-00RBA0_WD-WCAPT ONLINE

Ok, this PC only had 4x SATA drives and it appears I’ve found the correct drives. Things are looking good from the start.

Let’s do it!

:~$ sudo zpool import solaraid

:~$ sudo zpool status

pool: solaraid

state: ONLINE

status: One or more devices is currently being resilvered. The pool will

continue to function, possibly in a degraded state.

action: Wait for the resilver to complete.

scan: resilver in progress since Wed Nov 11 21:37:59 2015

11.5M scanned out of 1.63T at 1.15M/s, 412h58m to go

2.60M resilvered, 0.00% done

config:

NAME STATE READ WRITE CKSUM

solaraid ONLINE 0 0 0

raidz1-0 ONLINE 0 0 0

ata-WDC_WD7500AACS-00C7B0_WD-WCASN ONLINE 0 0 0

ata-WDC_WD7500AAKS-00RBA0_WD-WCAPT ONLINE 0 0 0

ata-WDC_WD7500AAKS-00RBA0_WD-WCAPT ONLINE 0 0 0

ata-WDC_WD7500AAKS-00RBA0_WD-WCAPT ONLINE 0 0 2 (resilvering)

errors: No known data errors

YOUCH! 412 hours… That’s 17 days! I gave it a couple of seconds to stabilise and ran it again, and this time it came up with an error:

:~$ sudo zpool status

pool: solaraid

state: ONLINE

status: One or more devices has experienced an unrecoverable error. An

attempt was made to correct the error. Applications are unaffected.

action: Determine if the device needs to be replaced, and clear the errors

using 'zpool clear' or replace the device with 'zpool replace'.

see: http://zfsonlinux.org/msg/ZFS-8000-9P

scan: resilvered 2.60M in 0h0m with 0 errors on Wed Nov 11 21:38:24 2015

config:

NAME STATE READ WRITE CKSUM

solaraid ONLINE 0 0 0

raidz1-0 ONLINE 0 0 0

ata-WDC_WD7500AACS-00C7B0_WD-WCASN ONLINE 0 0 0

ata-WDC_WD7500AAKS-00RBA0_WD-WCAPT ONLINE 0 0 0

ata-WDC_WD7500AAKS-00RBA0_WD-WCAPT ONLINE 0 0 0

ata-WDC_WD7500AAKS-00RBA0_WD-WCAPT ONLINE 0 0 2

errors: No known data errors
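As a sanity check on that first scare, the “412h58m to go” figure is roughly the remaining data divided by the scan rate at that moment. Here is a minimal Python sketch, assuming the T and M suffixes printed by zpool are binary units (TiB and MiB); `eta_hours` is a name made up for this illustration and not part of any ZFS tooling.

```python
def eta_hours(total_tib, scanned_mib, rate_mib_per_s):
    """Hours left if the scan keeps running at the current rate."""
    total_mib = total_tib * 1024 * 1024  # TiB -> MiB (assumed binary units)
    remaining_mib = total_mib - scanned_mib
    return remaining_mib / rate_mib_per_s / 3600


# Figures from the first status check: 11.5M of 1.63T scanned at 1.15M/s.
hours = eta_hours(1.63, 11.5, 1.15)
print(f"{hours:.0f} hours, or about {hours / 24:.1f} days")
```

This comes out at about 413 hours, a little over 17 days, which is within an hour of what zpool reported. The estimate only reflects the crawl of the first few seconds, which is why it vanished on the next check.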

Reboot and Ubuntu bootup fail

(long-winded fluff about nothing; you can scroll down to “Resilvering” if you’d like to skip this)

I do recall I had issues with this raid. I could certainly go the route of upgrading it first and trying again, but once I ran

tree -L 2 /solaraid/

and saw things I had long thought were gone, I decided to back this up first :)

The only problem is, the raid is installed in a box with only a 10/100 network card :(

I’ll let it run overnight, taking off only what I need, and see how we go. This has been a good find.
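To get a feel for what a 10/100 card means here, this rough Python sketch estimates how long it would take to pull everything off at 100 Mbit/s. It assumes the 1.63T shown by `zpool status` is in TiB and treats it as an upper bound, since on a raidz pool that figure should include parity. The 80% efficiency factor is purely a guess, and `copy_hours` is a hypothetical helper name.

```python
def copy_hours(size_bytes, link_mbit_per_s, efficiency=1.0):
    """Hours needed to move size_bytes over a link of the given speed."""
    bytes_per_s = link_mbit_per_s * 1_000_000 / 8 * efficiency
    return size_bytes / bytes_per_s / 3600


pool_bytes = 1.63 * 2**40  # the 1.63T reported by zpool status, read as TiB
best_case = copy_hours(pool_bytes, 100)
realistic = copy_hours(pool_bytes, 100, efficiency=0.8)  # 0.8 is a guess
print(f"best case {best_case:.0f} h, at 80% efficiency {realistic:.0f} h")
```

Even the best case is around 40 hours for the whole pool, which is why taking off only what is needed overnight is the sensible plan.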

At this point I was working from the house, and the server is located off-site. I had several SSH terminal windows open to the box, and as I was working away I kept getting the message to reboot the system. I issued the reboot command and set off a ping to tell me when it came back online… It didn’t come back online.

I went to the server and found it was still booting, more than half an hour later. Eventually it gave up, crashed and restarted again.

For the next couple of hours I could not get the drive to boot, and I was blaming the old boot drive. After eventually getting into the “Try Ubuntu” mode from the DVD, I found that one of the drives was not being reported by the system, another was coming up as totally unknown, and two were seen as part of a set. It took several hours to get to the bottom of it. Eventually, through the BIOS, I could see that one of the drives wasn’t being detected.

A couple of SATA cable changes and some swapping around of power cables, and I was back in business.

Resilvering

:~$ sudo zpool status

pool: solaraid

state: ONLINE

status: One or more devices has experienced an unrecoverable error. An

attempt was made to correct the error. Applications are unaffected.

action: Determine if the device needs to be replaced, and clear the errors

using 'zpool clear' or replace the device with 'zpool replace'.

see: http://zfsonlinux.org/msg/ZFS-8000-9P

scan: scrub in progress since Thu Nov 12 03:12:54 2015

1.73G scanned out of 1.63T at 32.3M/s, 14h40m to go

12.5K repaired, 0.10% done

config:

NAME STATE READ WRITE CKSUM

solaraid ONLINE 0 0 0

raidz1-0 ONLINE 0 0 0

ata-WDC_WD7500AACS-00C7B0_WD-WCASN ONLINE 0 0 0

ata-WDC_WD7500AAKS-00RBA0_WD-WCAPT ONLINE 0 0 0

ata-WDC_WD7500AAKS-00RBA0_WD-WCAPT ONLINE 0 0 13 (repairing)

ata-WDC_WD7500AAKS-00RBA0_WD-WCAPT ONLINE 0 0 0

errors: No known data errors

Over the next few minutes I kept polling the status and it was picking up speed.

scan: scrub in progress since Thu Nov 12 03:12:54 2015

24.3G scanned out of 1.63T at 84.5M/s, 5h31m to go

12.5K repaired, 1.46% done

scan: scrub in progress since Thu Nov 12 03:12:54 2015

32.1G scanned out of 1.63T at 97.0M/s, 4h47m to go

12.5K repaired, 1.93% done

scan: scrub in progress since Thu Nov 12 03:12:54 2015

56.9G scanned out of 1.63T at 102M/s, 4h29m to go

12.5K repaired, 3.42% done

scan: scrub in progress since Thu Nov 12 03:12:54 2015

184G scanned out of 1.63T at 137M/s, 3h4m to go

184K repaired, 11.01% done

It’s starting to slow down again, and we’re seeing more errors!

scan: scrub in progress since Thu Nov 12 03:12:54 2015

195G scanned out of 1.63T at 93.6M/s, 4h28m to go

354K repaired, 11.68% done
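Polling by hand works, but the progress line is regular enough to parse if you want to log it instead. The Python sketch below matches only the line format shown in the captures above (other ZFS versions may print it differently) and again assumes binary units. `parse_progress` and the dictionary keys are names invented for this example.

```python
import re

UNITS = {"K": 2**10, "M": 2**20, "G": 2**30, "T": 2**40}  # assumed binary

PROGRESS = re.compile(
    r"([\d.]+)([KMGT]) scanned out of ([\d.]+)([KMGT]) "
    r"at ([\d.]+)([KMGT])/s, (\d+)h(\d+)m to go"
)


def parse_progress(line):
    """Return scanned bytes, total bytes, rate in bytes/s and ETA in minutes."""
    m = PROGRESS.search(line)
    if m is None:
        return None  # not a progress line, or a format this sketch doesn't know
    scanned = float(m.group(1)) * UNITS[m.group(2)]
    total = float(m.group(3)) * UNITS[m.group(4)]
    rate = float(m.group(5)) * UNITS[m.group(6)]
    eta_min = int(m.group(7)) * 60 + int(m.group(8))
    return {"scanned": scanned, "total": total, "rate": rate, "eta_min": eta_min}


p = parse_progress("195G scanned out of 1.63T at 93.6M/s, 4h28m to go")
print(f"{100 * p['scanned'] / p['total']:.1f}% done, {p['eta_min']} minutes left")
```

Feeding it the last capture gives 11.7% done, in line with the 11.68% that zpool printed itself.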

The next day something had gone wrong. I’m still unsure what happened, but the whole `solaraid` pool became unresponsive. When I checked later in the day, the scrub was still sitting at the 195 GB it had reached the night (or rather, early morning) before, and the pool was otherwise not responding. I tried to reboot remotely and again it hung.

At present I’m still putting it down to hardware, but it’s really unknown what’s at the root of the problem.

It’s been back up and running for a while now and its present status is:

scan: scrub in progress since Thu Nov 12 03:12:54 2015

1.41T scanned out of 1.63T at 160M/s, 0h23m to go

1.23M repaired, 86.89% done

config:

NAME STATE READ WRITE CKSUM

solaraid ONLINE 0 0 0

raidz1-0 ONLINE 0 0 0

ata-WDC_WD7500AACS-00C7B0_WD-WCASN ONLINE 0 0 0

ata-WDC_WD7500AAKS-00RBA0_WD-WCAPT ONLINE 0 0 0

ata-WDC_WD7500AAKS-00RBA0_WD-WCAPT ONLINE 0 0 251 (repairing)

ata-WDC_WD7500AAKS-00RBA0_WD-WCAPT ONLINE 0 0 6 (repairing)

errors: No known data errors

When I captured the above, I hadn’t realised the process was almost finished until I pasted and read over it.

scan: scrub in progress since Thu Nov 12 03:12:54 2015

1.62T scanned out of 1.63T at 158M/s, 0h0m to go

1.29M repaired, 99.46% done

As I write this the process has been running for exactly 24 hours. We have 0.5% left.

The final capture:

:/solaraid$ sudo zpool status

pool: solaraid

state: ONLINE

status: One or more devices has experienced an unrecoverable error. An

attempt was made to correct the error. Applications are unaffected.

action: Determine if the device needs to be replaced, and clear the errors

using 'zpool clear' or replace the device with 'zpool replace'.

see: http://zfsonlinux.org/msg/ZFS-8000-9P

scan: scrub repaired 1.29M in 12h0m with 0 errors on Thu Nov 12 15:13:05 2015

config:

NAME STATE READ WRITE CKSUM

solaraid ONLINE 0 0 0

raidz1-0 ONLINE 0 0 0

ata-WDC_WD7500AACS-00C7B0_WD-WCASN ONLINE 0 0 0

ata-WDC_WD7500AAKS-00RBA0_WD-WCAPT ONLINE 0 0 0

ata-WDC_WD7500AAKS-00RBA0_WD-WCAPT ONLINE 0 0 291

ata-WDC_WD7500AAKS-00RBA0_WD-WCAPT ONLINE 0 0 12

errors: No known data errors

The scrub has verified every checksum in 1.63 TB of data and repaired 1.29 MB. The process took exactly 12 hours (with a reboot thrown in there JUST to push the boundaries a little bit).
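To keep an eye on which disks are racking up checksum errors, the config table can be filtered for rows with a non-zero CKSUM column. This Python sketch assumes the five-column layout (NAME STATE READ WRITE CKSUM) seen in the captures and plain integer counts. `cksum_errors` is a hypothetical helper, and the sample uses the device names as they appear (truncated) in this post.

```python
def cksum_errors(status_text):
    """Return (device, cksum_count) pairs for rows with a non-zero CKSUM."""
    flagged = []
    for line in status_text.splitlines():
        fields = line.split()
        # Device rows look like: NAME STATE READ WRITE CKSUM [note]
        if len(fields) >= 5 and all(f.isdigit() for f in fields[2:5]):
            if int(fields[4]) > 0:
                flagged.append((fields[0], int(fields[4])))
    return flagged


sample = """\
NAME STATE READ WRITE CKSUM
solaraid ONLINE 0 0 0
raidz1-0 ONLINE 0 0 0
ata-WDC_WD7500AACS-00C7B0_WD-WCASN ONLINE 0 0 0
ata-WDC_WD7500AAKS-00RBA0_WD-WCAPT ONLINE 0 0 0
ata-WDC_WD7500AAKS-00RBA0_WD-WCAPT ONLINE 0 0 291
ata-WDC_WD7500AAKS-00RBA0_WD-WCAPT ONLINE 0 0 12
"""
for device, count in cksum_errors(sample):
    print(device, count)
```

It returns a list rather than a dictionary because the truncated names repeat; with full serial numbers each row would identify one physical disk.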

Next we need to get off any data that we want… Let’s grab some directory information.

To be continued…

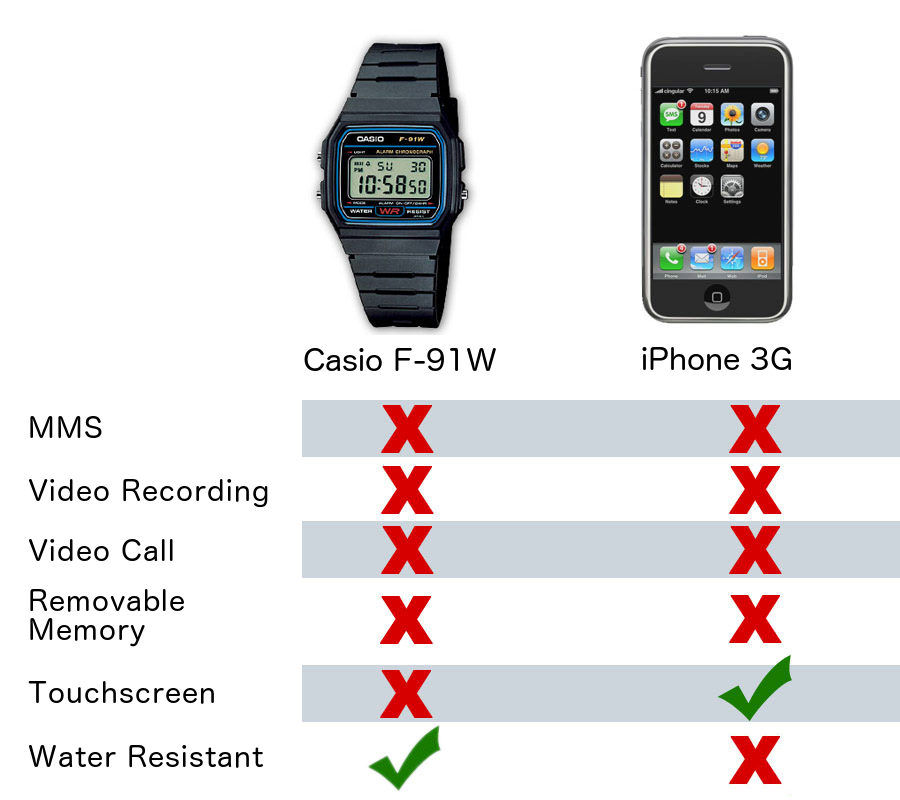

I had one of these watches!
